During each Worldwide Developers Conference keynote, app developers all over the world fear that their app may get sherlocked by Apple. This happened to flashlight apps when Apple introduced a simple toggle in Control Center, to f.lux when Apple added the same functionality to macOS and iOS, in part to Dropbox with the launch of iCloud Drive, and to many other developers. This year there was only one obvious case: the Workflow app, which Apple acquired last year and has now turned into Siri Shortcuts. So no real ‘sherlocking’. But when we skimmed the newest APIs, release notes and session descriptions after the keynote, we found that the technology behind our Portrait by img.ly app might have gotten sherlocked by the Core Image team. The reason is perfectly summarized in this tweet:

“Portrait Segmentation API

A new API for third-party developers allows for the separation of layers in a photo, such as separating the background from the foreground.”

Hi @halidecamera

Preshit Deorukhkar (@preshit), June 4, 2018

Quick spoiler: While Core Image’s new API is impressive, our technology is still ahead in terms of general availability, required hardware and cross-platform compatibility. But let’s start from the beginning:

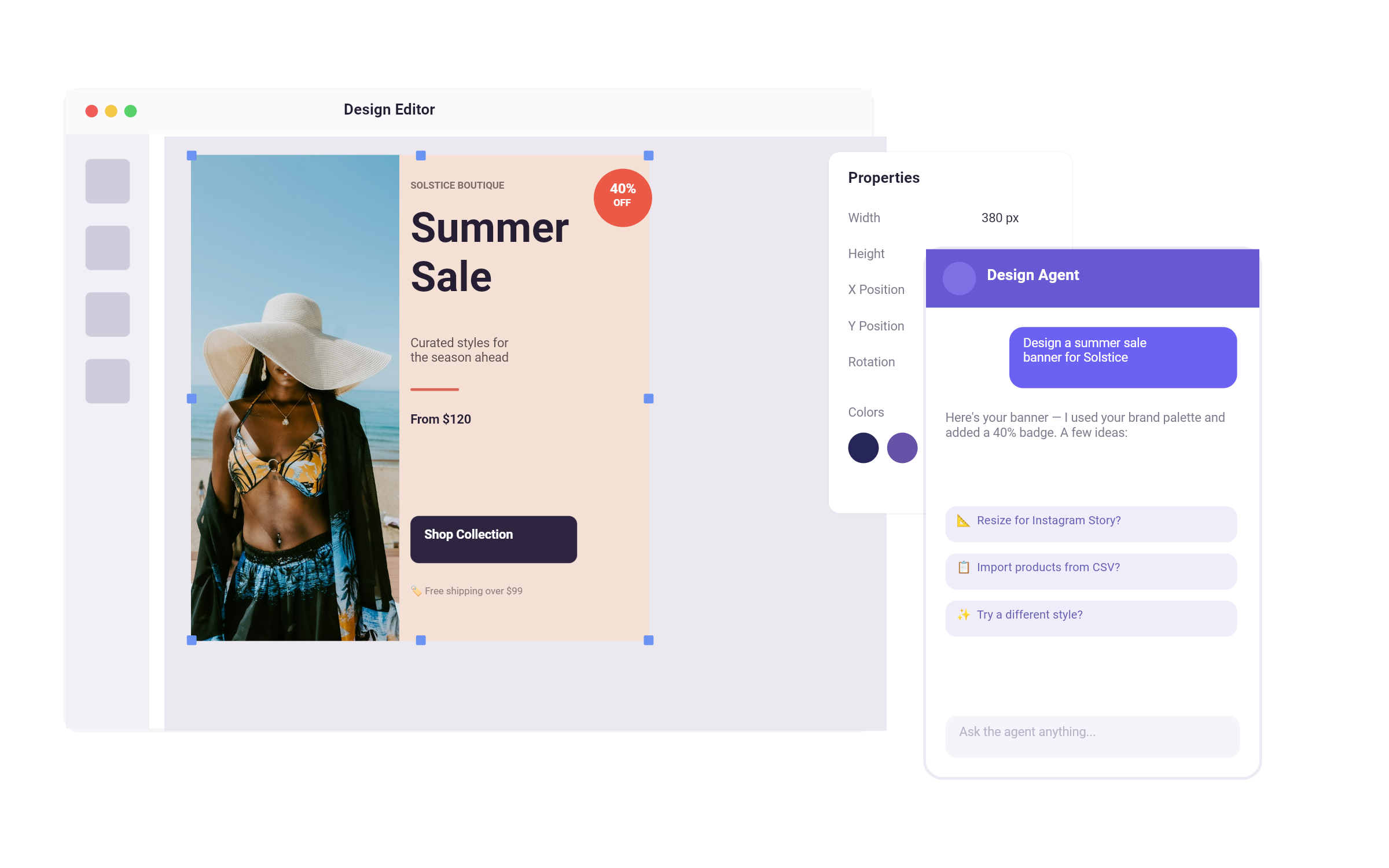

We started working on automated image segmentation in 2016 and decided to focus on portraits in 2017. After spending most of the year building a custom deep learning model, running hundreds of experiments and tweaking our post-processing pipeline, we finally released the Portrait app in fall 2017. The app generates portrait segmentations in real time and lets the user take a nice selfie, which is then automatically stylized using the segmentation mask and some post-processing. This allows for sophisticated effects and was well received all over the world. The app was even featured multiple times in many different countries. Recently we started looking into inferring depth, as we wanted to bring depth-based features to our PhotoEditor SDK but needed them to work on more iPhone models, on Android and, above all, on existing images that come without depth data.

Reading the tweet above, it looked like Apple had just added the Portrait app’s core functionality to the Core Image framework, making it available to all developers and users running or targeting iOS 12. A little worried about the new competition, we immediately looked into the docs and eagerly waited for last Thursday’s session to see if we’d soon need to find a new, unique way of using technology to empower creativity.

We found out that all you need to do in order to get a segmentation mask along with your image is toggle the following flags when requesting a photo capture from your AVCapturePhotoOutput:

    let settings = AVCapturePhotoSettings()
    settings.isDepthDataDeliveryEnabled = true
    settings.isPortraitEffectsMatteDeliveryEnabled = true
    ...

After the photo is captured, you’re then able to extract the matte in the `didFinishProcessingPhoto` callback. And voilà, you now have the captured image, a depth map and the mask separating foreground and background available:

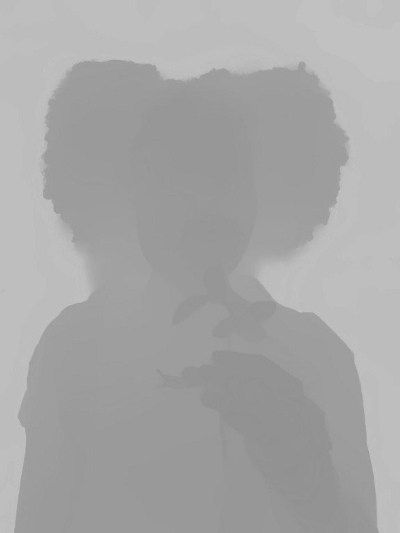
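For reference, a slightly fuller version of that capture flow might look like the sketch below. It assumes an already configured `AVCaptureSession` with a depth-capable camera attached to `photoOutput`; the names `MatteCaptureDelegate` and `capturePortraitMatte` are ours and purely illustrative. Note that, as far as we can tell from the docs, the same two flags also have to be enabled on the `AVCapturePhotoOutput` itself before they may be set on the per-photo settings.

```swift
import AVFoundation
import CoreImage

// Illustrative delegate (hypothetical name) that pulls the matte and the
// depth map out of the captured photo.
@available(iOS 12.0, *)
final class MatteCaptureDelegate: NSObject, AVCapturePhotoCaptureDelegate {
    func photoOutput(_ output: AVCapturePhotoOutput,
                     didFinishProcessingPhoto photo: AVCapturePhoto,
                     error: Error?) {
        guard error == nil else { return }

        // Both properties are optional and may be nil, so check before use.
        if let matte = photo.portraitEffectsMatte {
            let mask = CIImage(cvPixelBuffer: matte.mattingImage)
            print("matte size:", mask.extent.size)
        }
        if let depth = photo.depthData {
            let depthMap = CIImage(cvPixelBuffer: depth.depthDataMap)
            print("depth map size:", depthMap.extent.size)
        }
    }
}

// Illustrative helper (hypothetical name). The caller should keep a strong
// reference to `delegate` until the callback above has fired.
@available(iOS 12.0, *)
func capturePortraitMatte(with photoOutput: AVCapturePhotoOutput,
                          delegate: AVCapturePhotoCaptureDelegate) {
    // Bail out on hardware or configurations without depth or matte support.
    guard photoOutput.isDepthDataDeliverySupported,
          photoOutput.isPortraitEffectsMatteDeliverySupported else { return }

    // Enable delivery on the output first, then on the per-photo settings.
    photoOutput.isDepthDataDeliveryEnabled = true
    photoOutput.isPortraitEffectsMatteDeliveryEnabled = true

    let settings = AVCapturePhotoSettings()
    settings.isDepthDataDeliveryEnabled = true
    settings.isPortraitEffectsMatteDeliveryEnabled = true

    photoOutput.capturePhoto(with: settings, delegate: delegate)
}
```

In a real app the output flags would normally be set once while configuring the session rather than on every capture; they are inlined here only to keep the sketch short.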

Of course, we quickly spun up our own models and algorithms and compared the portrait matte, as well as the depth map, to Apple’s results. As there is no way to rerun Apple’s matting algorithm on an existing image, we took one of Apple’s samples and compared their mask with the results from our model:

While it may not have been the greatest idea to pick Apple’s shiny developer example, Apple’s mask is a little more detailed, and for this particular image our model missed parts of the neck on the left. But overall we were still very happy with our results, especially considering that everything was generated from the plain image and didn’t require any dedicated hardware like dual cameras or a TrueDepth sensor. We did of course expect a superior depth map from Apple, as we’re clearly lacking data and are still actively working on the depth model used to generate the image above. Interestingly, though, Apple’s depth map has some issues around the neck as well, despite their use of a dual camera system. And keep in mind that our results were all processed on the mobile device, entirely based on the RGB data contained in the image, and could be reproduced on an Android phone, on older iOS devices and even in your browser.

When we wanted to try more samples, we quickly noticed the major limitations of Apple’s API: As the portrait matte capture is only available in combination with depth data, it’s limited to the iPhone 7 Plus, 8 Plus and iPhone X. And, most important to us, portrait mattes taken with the front camera are limited to the iPhone X and its TrueDepth sensor array. So for our Portrait app, switching to the new API would require us to ditch our live preview and go iPhone X and iOS 12 only. This was enough to calm our minds, and we started thinking more deeply about Apple’s technology:

While the depth requirement is annoying, it also explains the higher quality of Apple’s predictions. Our model is trained to solve the individual problems of portrait matting and depth map inference as a whole, whereas Apple is able to focus on improving the edges, while the ‘rough’ foreground/background segmentation is handled by masking based on the depth of the face detected within the image. We might be able to combine both models as well, but that would make the segmentation model currently used in the Portrait app obsolete and would most certainly kill the real-time functionality.
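To make the ‘rough’ depth-based step more concrete, here is a toy sketch of the idea. It is not Apple’s actual algorithm, which we don’t know; it simply marks every pixel whose depth lies close to the depth of the detected face as foreground. The function name, the plain nested-array depth map and the tolerance value are all assumptions made for illustration.

```swift
// Toy illustration only: a rough foreground mask obtained by thresholding
// a depth map around the depth of the detected face.
// 1 = foreground (near the face's depth), 0 = background.
func roughForegroundMask(depth: [[Float]],
                         faceDepth: Float,
                         tolerance: Float) -> [[UInt8]] {
    return depth.map { (row: [Float]) -> [UInt8] in
        row.map { (d: Float) -> UInt8 in
            abs(d - faceDepth) <= tolerance ? 1 : 0
        }
    }
}

// Depth values in meters (made-up numbers): the subject sits at about 1 m,
// the background at about 3 m.
let sampleDepth: [[Float]] = [
    [3.0, 3.1, 0.9],
    [2.9, 1.0, 1.1],
]
let roughMask = roughForegroundMask(depth: sampleDepth,
                                    faceDepth: 1.0,
                                    tolerance: 0.25)
print(roughMask) // prints [[0, 0, 1], [0, 1, 1]]
```

A mask like this is blocky and noisy around hair and shoulders, which is presumably where a dedicated edge-refinement step would take over.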

Overall, Apple’s technology is, as almost always, pretty impressive, and the portrait matte generation works flawlessly. But we can now say that, for use within our app, our current algorithms reach good quality, and we think that a real-time preview is more important to our users. For our PhotoEditor SDK we’d happily use the high-fidelity maps generated by iOS 12, but the hardware requirements are not yet suitable for SDK deployment. Our algorithms, on the other hand, neither require a depth map nor limit us to processing after the image was captured; they can perform real-time inference on devices as old as the iPhone 6S. And best of all: once TrueDepth cameras are more common and everyone is running iOS 12, we can still use the Portrait Segmentation API and enjoy its simplicity.
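The trade-off above boils down to a small decision rule, sketched here with hypothetical names (`SegmentationBackend`, `preferredBackend`). In a real app, `matteSupported` would presumably come from `AVCapturePhotoOutput.isPortraitEffectsMatteDeliverySupported` on iOS 12; it is passed in as a plain `Bool` here so that the sketch stays self-contained.

```swift
// Hypothetical names, for illustration only.
enum SegmentationBackend {
    case systemMatte  // portrait matte delivered by iOS 12 with the captured photo
    case customModel  // RGB-only model that needs no depth hardware
}

func preferredBackend(matteSupported: Bool,
                      needsLivePreview: Bool) -> SegmentationBackend {
    // The system matte only arrives with a captured photo, so a live
    // preview always has to go through the custom model.
    if needsLivePreview { return .customModel }
    return matteSupported ? .systemMatte : .customModel
}

// A still capture on supported hardware can use the system matte.
print(preferredBackend(matteSupported: true, needsLivePreview: false))  // prints systemMatte
// The live selfie preview stays on the custom model.
print(preferredBackend(matteSupported: true, needsLivePreview: true))   // prints customModel
```

Keeping the choice behind one function like this would make it easy to switch more users over to the system matte as supported hardware becomes more common.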

Thanks for reading! To stay in the loop, subscribe to our Newsletter.